Testing Authentication with Playwright: The Complete Guide

Learn how to handle secrets, OAuth, MFA, magic links, and parallel CI without flaky runs.

Most Playwright test suites start with a login helper, a few stored credentials, and auth that works. At 20 or 30 tests, it holds up. Past 100, the auth setup that worked fine at 30 starts breaking in ways that are hard to trace.

Authentication touches every test that involves a user session. When it breaks, the damage spreads. The problem appears once tests run in parallel: multiple workers reuse the same session state, tokens expire during long pipeline runs, and test helpers introduce race conditions that pass locally but fail in CI. If each login takes 5 to 15 seconds through the UI and you're running 100 tests without cached auth, that's 8 to 25 minutes of pure login overhead per run.

MFA is now the standard, with 83% of organizations requiring it and adoption reaching 87% among larger companies. Combined with SSO, magic links, and multi-tenant flows, authentication requires a test architecture that won't collapse as the suite grows.

What this guide covers:

- Why Authentication Testing Is Different

- The Credential Anti-Patterns You'll Hit at Scale

- Authentication State Architecture at Scale

- Multi-User Testing with Multiple Roles

- Testing OAuth Flows Reliably

- Magic Link Testing

- SSO and Multi-Tenant Auth

- MFA and Session Management

- Debugging Auth Test Failures

- Integration with CI/CD and Secrets Management

- Compliance and Security

- Observability

Why Authentication Testing Is Different

Auth state affects every part of the system. A session cookie, an OAuth token, or a storageState file shapes what pages render, what API calls succeed, and what other tests see when they run in parallel.

Playwright's browser context isolation gives each test its own cookies, localStorage, and cache. But it does not isolate server-side state. Two tests running in separate contexts but sharing the same user account can still interfere with each other. That's what makes auth testing harder than it looks.

OAuth providers, SSO systems, and email-based magic links add dependencies your test suite can't control. A rate limit from an identity provider, a delayed token exchange, or a SAML redirect that behaves differently in CI than locally will break a test that otherwise works fine. And credentials carry a different weight than regular test data. A leaked storageState file or a hardcoded token isn't a test problem; it's a security incident. More on this in the Compliance and Security section.

The Credential Anti-Patterns You'll Hit at Scale

In many test suites, credentials live in a .env file, a single test user handles everything, and the suite runs in sequence. That works at 20 tests. At 100, failures appear that trace back to decisions that made sense when the suite was small.

Anti-Pattern 1: The Single Shared Test User

A single email and password used across every test works fine for sequential runs. The problem hits the moment you run in parallel. Six workers sharing one account means your tests fight over the same session state. One test changes a role permission, another reads stale state, and failures appear in places that have nothing to do with the code under test.

One account per worker is the minimum. If your app mutates server-side state per user (role changes, settings, preferences), you need one account per test. A user factory that provisions isolated accounts at the start of each worker removes these conflicts at the source.

Playwright's parallel execution model means this isn't optional. A password change, an account lockout, or an MFA prompt on the shared user mid-run takes down the entire suite.

Anti-Pattern 2: Credentials Hardcoded in Git

const testUser = { email: 'test@example.com', password: 'password123' }

Lines like this appear in more repositories than you'd expect. They start as a convenience during local development and become permanent. GitGuardian's 2025 State of Secrets Sprawl report found 23.8 million secrets leaked on public GitHub repositories in 2024 alone (25% year-over-year increase), with 70% of secrets from 2022 still active. Private repos are worse: 35% contain at least one plaintext credential.

Anti-Pattern 3: Globally Shared Auth Tokens

Storing a token in a global variable after the first test authenticates and having every following test read from it looks like a performance win. In practice, it creates a hidden dependency chain across the entire suite. When that token expires mid-run, all downstream tests fail. Logout events and session invalidation make the failures random.

Scope auth state per worker or per test context. Worker-scoped fixtures using testInfo.parallelIndex provision unique storage state files per worker, removing the shared token dependency entirely. We'll show the code for this below.

Anti-Pattern 4: Mocking OAuth Without Real Integration

Mocking OAuth at the feature test layer is the right call for speed. The problem is when mocking is the only coverage. The actual redirect flow, token exchange, and callback handling never get tested, and those are exactly where production bugs appear. A misconfigured redirect URI, a missing state parameter check, or a broken PKCE implementation stays invisible in a mocked environment and shows up when a real user logs in.

Anti-Pattern 5: Testing Only the Happy Path

Only testing successful logins while ignoring invalid credentials, account lockouts, expired MFA codes, or failed SSO assertions leaves you blind to failures that actually happen in production. Weak lockout logic and missing error redirects are security issues that surface when real users hit them.

Anti-Pattern 6: Timing-Dependent Auth Checks

Using page.waitForTimeout() after login instead of waiting for a specific signal (URL change, visible element, API response) is one of the most common sources of auth flakiness. Playwright's auto-waiting handles most of this, but auth flows often involve redirects across multiple URLs, and those need explicit waits for the final destination.

Anti-Pattern 7: Leftover Sessions Between Runs

If sessions persist in the database or cache after a test finishes, they affect the starting state of the next run. Explicit teardown (clearing sessions and removing test users) after each run keeps the environment consistent. On the client side, writing auth files under testProject.outputDir, which Playwright cleans before each run, keeps stale storage state files from carrying over. It does not touch sessions stored on the server, so those still need the explicit teardown.

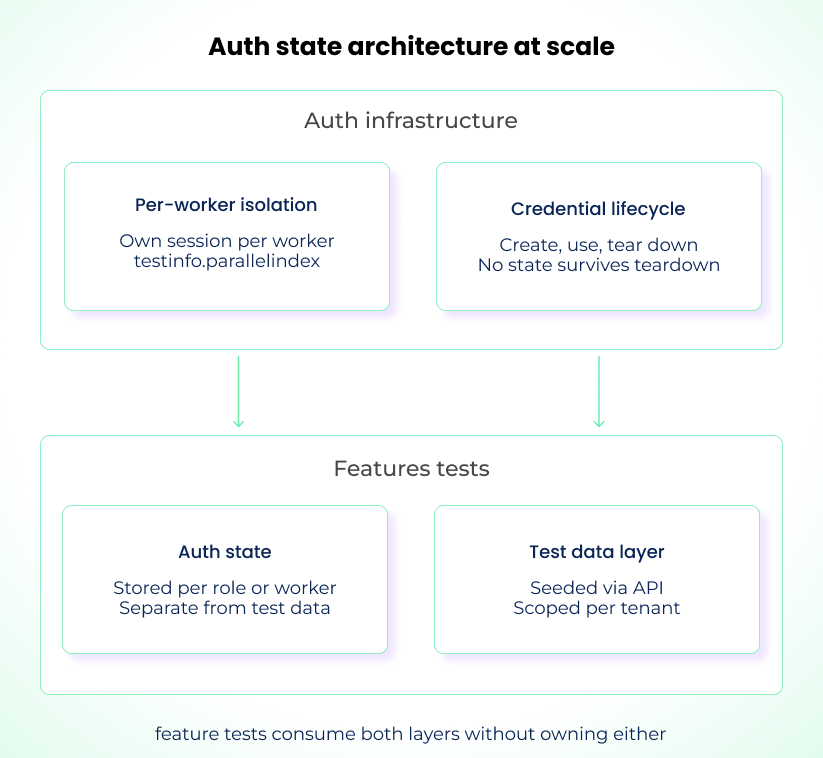

Authentication State Architecture at Scale

When your suite grows past 100 tests, the first thing that breaks is the authentication setup. Most suites start with a single setup file that logs in once and saves a storageState file, and every test project loads that file. That works until tests start modifying server-side state, running across multiple parallel workers, or operating across different user roles.

Treat authentication as infrastructure, not setup boilerplate.

The storageState Setup Project

The foundation is Playwright's setup project pattern. A dedicated setup file logs in once and saves the browser state. Every test project declares that setup as a dependency and loads the saved state.

// tests/auth.setup.ts
import { test as setup, expect } from "@playwright/test";

const authFile = "playwright/.auth/user.json";

setup("authenticate", async ({ page }) => {
  await page.goto("/login");
  await page.getByLabel("Email").fill(process.env.TEST_USER_EMAIL);
  await page.getByLabel("Password").fill(process.env.TEST_USER_PASSWORD);
  await page.getByRole("button", { name: "Sign in" }).click();
  await page.waitForURL("/dashboard");
  await page.context().storageState({ path: authFile });
});

// playwright.config.ts
import { defineConfig, devices } from "@playwright/test";

export default defineConfig({
  projects: [
    { name: "setup", testMatch: /.*\.setup\.ts/ },
    {
      name: "chromium",
      use: {
        ...devices["Desktop Chrome"],
        storageState: "playwright/.auth/user.json",
      },
      dependencies: ["setup"],
    },
  ],
});

Every test starts already authenticated. The setup runs once, and all tests reuse the saved state.

Add playwright/.auth to your .gitignore. Storage state files contain session cookies and tokens. Committing them to your repo is a security incident.

Authenticate via API Instead of UI

Default to API-based authentication for your setup project. Only test login through the UI in your dedicated auth flow tests.

Playwright's request fixture can authenticate without opening a browser. This is faster than clicking through a login form and eliminates flakiness from form interactions.

// tests/auth.setup.ts
import { test as setup, expect } from "@playwright/test";

const authFile = "playwright/.auth/user.json";

setup("authenticate via API", async ({ request }) => {
  const response = await request.post("/api/login", {
    data: {
      email: process.env.TEST_USER_EMAIL,
      password: process.env.TEST_USER_PASSWORD,
    },
  });
  // Fail the setup here rather than saving an unauthenticated state file
  expect(response.ok()).toBeTruthy();

  await request.storageState({ path: authFile });
});

Use API auth for setup speed. Use UI auth only in tests that verify the login flow itself.

The Session Storage Gotcha

Check where your app stores auth tokens. storageState saves cookies and localStorage by default. If your app stores tokens in IndexedDB, pass indexedDB: true to storageState() (available since Playwright 1.51). storageState does not save sessionStorage regardless. If your app stores JWT tokens or session IDs in sessionStorage, the standard storageState pattern silently produces unauthenticated tests.

The Playwright docs document the workaround:

import fs from "fs";

// After login, save session storage separately
const sessionData = await page.evaluate(() => JSON.stringify(sessionStorage));
fs.writeFileSync("playwright/.auth/session.json", sessionData, "utf-8");

// In a new context, restore it with an init script
// (named savedSessionStorage to avoid shadowing the browser global)
const savedSessionStorage = JSON.parse(
  fs.readFileSync("playwright/.auth/session.json", "utf-8"),
);
await context.addInitScript((storage) => {
  if (window.location.hostname === "your-app.com") {
    for (const [key, value] of Object.entries(storage))
      window.sessionStorage.setItem(key, value);
  }
}, savedSessionStorage);

If you don't know whether your app uses sessionStorage, check the Application tab in DevTools after logging in. This is the kind of thing that works fine locally (because the browser session persists) and fails silently in CI.

Per-Worker Auth Isolation

For suites where tests modify server-side state (settings, roles, permissions), a single shared auth file breaks down. The practical middle ground is one authenticated session per parallel worker.

Playwright's docs call this the "moderate" approach. Each worker gets its own account using testInfo.parallelIndex:

// playwright/fixtures.ts
import { test as baseTest, expect } from "@playwright/test";
import fs from "fs";
import path from "path";

export * from "@playwright/test";

export const test = baseTest.extend<{}, { workerStorageState: string }>({
  // Every test in a worker reuses that worker's storage state
  storageState: ({ workerStorageState }, use) => use(workerStorageState),

  workerStorageState: [
    async ({ browser }, use) => {
      // parallelIndex is unique per worker, so each worker gets its own file
      const id = test.info().parallelIndex;
      const fileName = path.resolve(
        test.info().project.outputDir,
        `.auth/${id}.json`,
      );

      // Reuse the state if this worker has already authenticated
      if (fs.existsSync(fileName)) {
        await use(fileName);
        return;
      }

      // Authenticate in a clean context, ignoring any configured storage state
      const page = await browser.newPage({ storageState: undefined });

      // acquireAccount is a placeholder for your own account-provisioning helper
      const account = await acquireAccount(id);
      await page.goto("/login");
      await page.getByLabel("Email").fill(account.email);
      await page.getByLabel("Password").fill(account.password);
      await page.getByRole("button", { name: "Sign in" }).click();
      await page.waitForURL("/dashboard");

      await page.context().storageState({ path: fileName });
      await page.close();
      await use(fileName);
    },
    { scope: "worker" },
  ],
});

Using project.outputDir for the auth files means Playwright cleans them up automatically before each test run. No stale sessions leaking between runs.
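The fixture above calls acquireAccount(id) without defining it. One possible shape, sketched here under the assumption that a pool of accounts already exists and is exposed through environment variables: the TEST_USER_<n>_EMAIL and TEST_USER_<n>_PASSWORD names are hypothetical, and a real suite might create accounts through an admin API instead.

```typescript
// Hypothetical helper: maps a worker's parallelIndex to a pre-provisioned account.
// The TEST_USER_<n>_EMAIL / TEST_USER_<n>_PASSWORD naming is an assumption.
export interface TestAccount {
  email: string;
  password: string;
}

export function acquireAccount(
  parallelIndex: number,
  env: Record<string, string | undefined> = process.env,
): TestAccount {
  const email = env[`TEST_USER_${parallelIndex}_EMAIL`];
  const password = env[`TEST_USER_${parallelIndex}_PASSWORD`];

  if (!email || !password) {
    // Fail fast instead of falling back to a shared account,
    // which would reintroduce cross-worker interference.
    throw new Error(
      `No account configured for worker ${parallelIndex}: ` +
        `set TEST_USER_${parallelIndex}_EMAIL and TEST_USER_${parallelIndex}_PASSWORD`,
    );
  }
  return { email, password };
}
```

Throwing when a worker has no account keeps a misconfigured pipeline from silently sharing one user across workers. The pool needs at least as many accounts as the configured number of workers.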

Separate Auth Tests from Feature Tests

Auth tests verify login, logout, token expiry, MFA flows, and SSO assertions. Feature tests assume authentication works and focus on the product. Keep them in separate test suites.

When auth logic gets mixed into feature tests, failures multiply. A feature test should fail only when there's a feature problem. If auth is embedded, session expiry or token rotation triggers failures that look like product bugs. Your auth setup has its own integration points and failure modes. Give it dedicated coverage.

Multi-User Testing with Multiple Roles

Most production systems involve multiple users with different permissions. Define separate projects in playwright.config.ts, each with its own pre-authenticated storageState:

// playwright.config.ts
import { defineConfig, devices } from "@playwright/test";

export default defineConfig({
  projects: [
    { name: "setup", testMatch: /.*\.setup\.ts/ },
    {
      name: "chromium-admin",
      use: {
        ...devices["Desktop Chrome"],
        storageState: "playwright/.auth/admin.json",
      },
      dependencies: ["setup"],
    },
    {
      name: "chromium-viewer",
      use: {
        ...devices["Desktop Chrome"],
        storageState: "playwright/.auth/viewer.json",
      },
      dependencies: ["setup"],
    },
  ],
});

For tests that need multiple roles interacting in the same test (admin publishes content, viewer sees it), use separate browser contexts:

test("admin changes are visible to viewer", async ({ browser }) => {
  const adminContext = await browser.newContext({
    storageState: "playwright/.auth/admin.json",
  });
  const viewerContext = await browser.newContext({
    storageState: "playwright/.auth/viewer.json",
  });

  const adminPage = await adminContext.newPage();
  const viewerPage = await viewerContext.newPage();

  await adminPage.goto("/admin/announcements");
  await adminPage.getByLabel("Title").fill("Maintenance Window");
  await adminPage.getByRole("button", { name: "Publish" }).click();
  await expect(adminPage.locator(".status")).toHaveText("Published");

  await viewerPage.goto("/dashboard");
  await expect(viewerPage.locator(".announcement")).toContainText(
    "Maintenance Window",
  );

  await adminContext.close();
  await viewerContext.close();
});

Test both sides of every permission: admin can access the dashboard, and viewer gets blocked from admin routes. Role changes should remove access immediately. Tenant A cannot see Tenant B's data. Every permission rule needs a test that confirms it works under parallel execution.
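One way to keep both sides of each rule covered is to write the rules down as data and derive the test cases from them. The sketch below is only an illustration: the role names, routes, and the PermissionCase shape are assumptions, not part of any Playwright API.

```typescript
// Illustrative roles and routes; replace them with your app's own.
type Role = "admin" | "viewer";

interface PermissionCase {
  role: Role;
  route: string;
  allowed: boolean;
}

// Routes each role may open. Anything not listed is expected to be blocked.
const allowedRoutes: Record<Role, string[]> = {
  admin: ["/dashboard", "/admin/announcements"],
  viewer: ["/dashboard"],
};

// Expand the table into one case per (role, route) pair, so every
// "can access" rule gets a matching "cannot access" check.
export function permissionCases(
  table: Record<Role, string[]> = allowedRoutes,
): PermissionCase[] {
  const roles = Object.keys(table) as Role[];

  // Collect every route mentioned by any role, without duplicates.
  const routes: string[] = [];
  for (const role of roles) {
    for (const route of table[role]) {
      if (!routes.includes(route)) routes.push(route);
    }
  }

  const cases: PermissionCase[] = [];
  for (const role of roles) {
    for (const route of routes) {
      cases.push({ role, route, allowed: table[role].includes(route) });
    }
  }
  return cases;
}
```

Each case can then drive one Playwright test that opens the route in a context loaded with that role's storageState. What "blocked" looks like (a 403, a redirect to login, a hidden page) depends on the app, so the assertion for the denied cases has to match its actual behavior.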

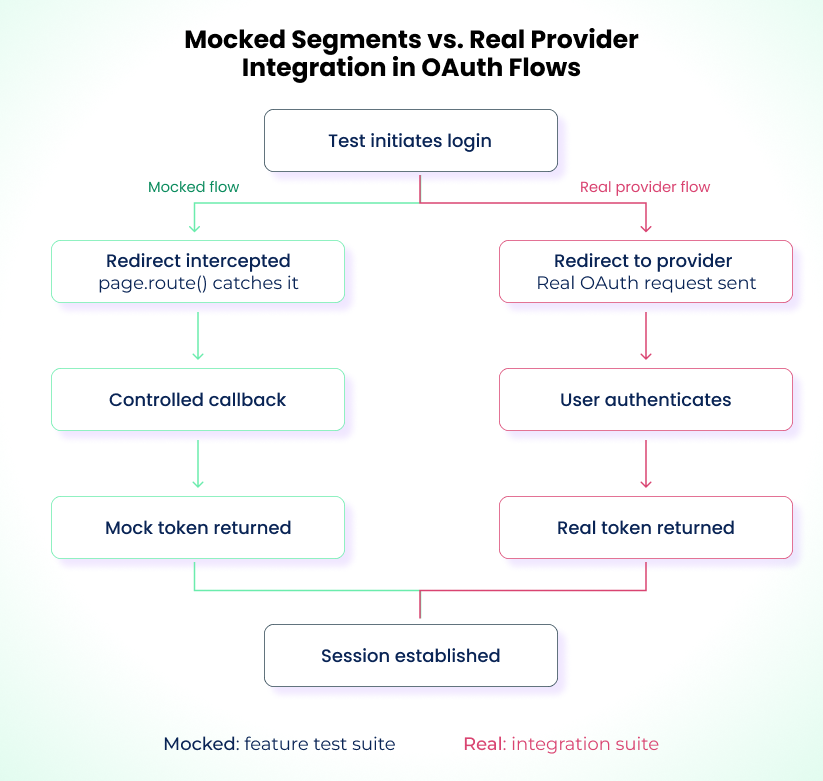

Testing OAuth Flows Reliably

OAuth flows are the hardest auth pattern to test. The redirect chain involves multiple hops across domains, server-side token exchanges, and external providers with their own rate limits, bot detection, and UI changes. Automating every test through a real OAuth provider leads to flaky, slow pipelines.

Mock OAuth for every feature test. No exceptions. Then run real provider tests on a schedule, not in your PR gate.

Mock OAuth for Feature Tests

Use page.route() to intercept the redirect to the OAuth provider and send the browser straight back to your callback URL with a mock authorization code:

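test("dashboard loads after OAuth login", async ({ page }) => {
  await page.route("https://accounts.google.com/**", async (route) => {
    const url = new URL(route.request().url());
    const state = url.searchParams.get("state");
    const redirectUri = url.searchParams.get("redirect_uri");

    if (!redirectUri) {
      // Not an authorization request this mock can answer; fail loudly
      await route.abort();
      return;
    }

    // searchParams.get() decodes values, so re-encode them when building the callback
    const callback = new URL(redirectUri);
    callback.searchParams.set("code", "mock-auth-code");
    if (state) callback.searchParams.set("state", state);

    await route.fulfill({
      status: 302,
      headers: { Location: callback.toString() },
    });
  });

  await page.goto("/login");
  await page.getByRole("button", { name: "Continue with Google" }).click();
  await expect(page).toHaveURL("/dashboard");
});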

This intercepts the browser-side redirect. Your backend still needs to handle the mock authorization code. The simplest approach is a test-mode bypass in your token exchange endpoint that accepts any code and returns a valid session. If you don't want to modify your backend, the oauth2-mock-server npm package runs a local OAuth server with configurable token responses. Point your app's OAuth config at it during tests.

The state parameter matters. OAuth uses it to prevent CSRF. Your mock must echo back the exact state value from the original redirect, or the app will reject the callback.

Test Real OAuth Separately

Mocks confirm the app behaves correctly given the right inputs. They don't catch integration problems. A misconfigured redirect URI, a broken PKCE implementation, or an invalid scope request passes every mocked test and fails in production.

Run a small suite of dedicated integration tests against real OAuth outside the main CI gate. A scheduled nightly run catches these failures without blocking pull requests. Use dedicated provider test tenants for these runs: a Google Workspace test org, a Microsoft Entra ID (formerly Azure AD) sandbox tenant, or an Okta preview org. Running against shared corporate IdPs leads to throttling and permission conflicts that create their own flakiness.

Mocking PKCE Flows

If your app uses PKCE (required for public clients like SPAs), the mock needs to handle the code_verifier/code_challenge exchange. During the authorization redirect, the app sends a code_challenge derived from a random code_verifier. When exchanging the authorization code for a token, the app sends the original code_verifier, and the server verifies it matches the challenge.

Your page.route() mock doesn't need to validate PKCE itself because it's bypassing the provider entirely. But your backend's token exchange endpoint does. If your backend validates the code_verifier against the code_challenge, the test-mode bypass needs to skip that check too, or the token exchange will fail even though the browser-side mock worked.

When using oauth2-mock-server, PKCE is handled automatically: it supports the Authorization Code grant with PKCE out of the box. Point your app's OAuth config at it and the full code_challenge/code_verifier cycle runs against a real (local) server.

The important thing: your scheduled real-provider tests (not the mocked ones) are what catch broken PKCE implementations. A wrong hash algorithm, a missing code_challenge_method=S256 parameter, or a code_verifier that doesn't meet the 43-128 character requirement all pass in mocked environments and fail in production.
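The verifier and challenge relationship described in this section is small enough to write out. The sketch below follows the S256 method from RFC 7636 using Node's built-in crypto module; the function names are illustrative, and a mock token endpoint or a unit test could use them to check what the app actually sends.

```typescript
import { createHash, randomBytes } from "node:crypto";

// RFC 7636: 43 to 128 characters from the unreserved set A-Z a-z 0-9 - . _ ~
const VERIFIER_PATTERN = /^[A-Za-z0-9\-._~]{43,128}$/;

export function isValidCodeVerifier(verifier: string): boolean {
  return VERIFIER_PATTERN.test(verifier);
}

// S256: base64url(SHA-256(code_verifier)), without "=" padding.
export function codeChallengeS256(verifier: string): string {
  return createHash("sha256").update(verifier, "ascii").digest("base64url");
}

// 32 random bytes encode to a 43-character base64url string,
// the minimum length the spec allows.
export function generateCodeVerifier(): string {
  return randomBytes(32).toString("base64url");
}

// Server-side check: the verifier sent at token exchange must hash
// to the challenge sent on the authorization redirect.
export function verifierMatchesChallenge(
  verifier: string,
  challenge: string,
): boolean {
  return isValidCodeVerifier(verifier) && codeChallengeS256(verifier) === challenge;
}
```

A test-mode token endpoint that keeps this check, rather than skipping it, can catch a client that hashes with the wrong algorithm or sends the plain verifier as the challenge.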

When OAuth Mocking Breaks

If your mock returns a valid-looking callback but the app rejects it, debug it in layers.

Open the Playwright trace and go to the Network tab. Look for the request to your callback URL (/auth/callback or similar). Check whether the state query parameter matches the one from the original redirect to the OAuth provider. If it doesn't, your mock is generating or echoing the value incorrectly.

Next, compare the redirect_uri parameter in the intercepted request against what's registered in your OAuth provider's app configuration. A mismatch here (trailing slash, http vs https, port number) causes a silent rejection. The provider returns an error, but your app often just redirects to a login page without surfacing the cause.

Finally, check your backend logs for the token exchange step. If the browser-side redirect worked but the user still isn't authenticated, the backend likely tried to exchange the mock authorization code with the real provider and got a 400 or 401 back. Your test environment needs to either skip the token exchange or point it at a local mock server.
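The redirect_uri comparison in the second step is easy to get wrong by eye. A small helper can spell out which part differs. This is a sketch with a hypothetical function name; it uses only the standard URL class, and since providers generally compare the registered value as an exact string, it also flags strings that differ only after URL normalization.

```typescript
// Lists the differences between the redirect_uri the app sent and the one
// registered with the provider. An empty array means the strings are identical.
export function redirectUriMismatches(
  sent: string,
  registered: string,
): string[] {
  const a = new URL(sent);
  const b = new URL(registered);
  const problems: string[] = [];

  if (a.protocol !== b.protocol) {
    problems.push(`scheme: ${a.protocol} vs ${b.protocol}`);
  }
  if (a.hostname !== b.hostname) {
    problems.push(`host: ${a.hostname} vs ${b.hostname}`);
  }
  if (a.port !== b.port) {
    problems.push(`port: "${a.port}" vs "${b.port}"`);
  }
  if (a.pathname !== b.pathname) {
    // Catches trailing-slash differences such as /auth/callback vs /auth/callback/
    problems.push(`path: ${a.pathname} vs ${b.pathname}`);
  }
  if (problems.length === 0 && sent !== registered) {
    // Parsed components match, but the raw strings do not (for example,
    // a query string or an explicit default port).
    problems.push("raw strings differ even though parsed components match");
  }
  return problems;
}
```

Calling it with the value captured from the intercepted request and the value copied from the provider's console gives a short list to attach to the failing test's output.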

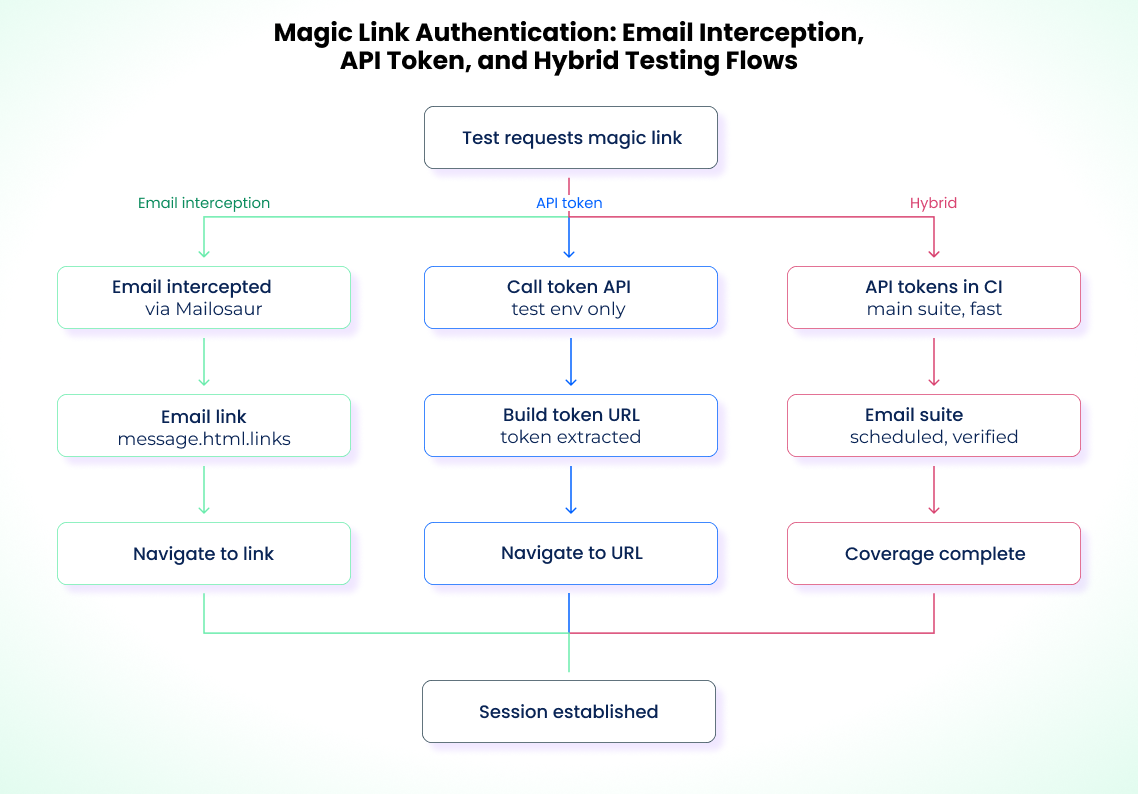

Magic Link Testing

If your app uses magic links, expose a test-only token endpoint in staging. Magic links live in email, and standard form-based test patterns can't reach them. Routing every test through real email delivery is a reliability problem you don't need.

API-Based Token Generation (Use This for Feature Tests)

If your application exposes a backend endpoint that issues magic link tokens, tests can call that endpoint directly, construct the URL, and navigate:

test("magic link logs in the user", async ({ page, request }) => {
  const response = await request.post("/api/test/magic-link", {
    data: { email: "testuser@example.com" },
  });
  const { token } = await response.json();

  await page.goto(`/auth/verify?token=${token}`);
  await expect(page).toHaveURL("/dashboard");
  await expect(page.getByText("Welcome")).toBeVisible();
});

That endpoint must be restricted to test and staging environments. Making it available in production creates a token-generation vulnerability that bypasses the email delivery step entirely.

Email Interception for End-to-End Coverage

For tests that need to verify the full flow (email delivery, link format, click-through), tools like Mailosaur provide virtual email addresses and a queryable API. A Playwright test triggers the magic link send, polls for the email, extracts the link, and navigates to it. Email delivery adds latency and infrastructure costs, but if your product relies on magic links, you should have a small suite that covers the real flow.

Run email-based tests on a schedule, not on every commit. The main suite uses API-generated tokens for speed.
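The poll-and-extract step of the email flow can be kept independent of any particular mail provider. The sketch below assumes the link has the /auth/verify?token=... shape used earlier in this guide; fetchOnce is a placeholder for whatever call your email testing tool exposes, and both function names are illustrative.

```typescript
// Pulls the first verification link out of an email body (plain text or HTML).
// The /auth/verify?token=... shape is an assumption; adjust it to your link format.
export function extractMagicLink(body: string): string | null {
  const match = body.match(
    /https?:\/\/[^\s"'<>]+\/auth\/verify\?token=[A-Za-z0-9._~-]+/,
  );
  return match ? match[0] : null;
}

// Generic polling helper: calls fetchOnce until it returns a non-null value
// or the timeout elapses. fetchOnce stands in for your mail provider's API call.
export async function pollUntil<T>(
  fetchOnce: () => Promise<T | null>,
  timeoutMs = 30_000,
  intervalMs = 1_000,
): Promise<T> {
  const deadline = Date.now() + timeoutMs;
  while (true) {
    const result = await fetchOnce();
    if (result !== null) return result;
    if (Date.now() + intervalMs > deadline) {
      throw new Error(`No result within ${timeoutMs} ms`);
    }
    await new Promise((resolve) => setTimeout(resolve, intervalMs));
  }
}
```

In a test, pollUntil would wrap the provider call that fetches the newest message for the test address, and the extracted link would go straight into page.goto(). An explicit timeout keeps a delayed email from hanging the run until the test-level timeout fires.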

When Magic Links Break

Token expiration is where most coverage gaps hide. Magic link tokens typically expire in 5 to 15 minutes and should be single-use. Test that expired tokens show a clear error message, that reusing a token fails, and that malformed tokens don't crash the app. Error messaging when a link expires or gets reused deserves the same attention as the happy path.

SSO and Multi-Tenant Auth

SSO relies on multiple systems working together. A user starts on the login page, gets redirected to a corporate IdP, authenticates there, and returns with a SAML assertion or OIDC token that the application must verify. Each step depends on a separate system and can fail differently.

Playwright's page.route() can intercept the browser's POST to the Assertion Consumer Service (ACS) endpoint and return a controlled response. But SAML validation doesn't stop at the browser. The application validates the assertion's cryptographic signature server-side against the IdP's certificate.

Don't mock SAML by disabling signature validation. Use a test IdP. Disabling validation means you're testing a different code path than production. Keycloak in dev mode is the most practical option: it runs in a single Docker container, signs assertions with real certificates, and supports both SAML and OIDC. Spin it up alongside your app in CI and point your SP configuration at it.

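# docker-compose.test.yml
services:
  keycloak:
    # Pin a specific version tag in CI; "latest" can change between runs
    image: quay.io/keycloak/keycloak:latest
    command: start-dev --import-realm
    environment:
      # Keycloak 26+ deprecates these two in favor of
      # KC_BOOTSTRAP_ADMIN_USERNAME / KC_BOOTSTRAP_ADMIN_PASSWORD
      KEYCLOAK_ADMIN: admin
      KEYCLOAK_ADMIN_PASSWORD: admin
    ports:
      - "8080:8080"
    volumes:
      - ./test-realm.json:/opt/keycloak/data/import/test-realm.json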

Export your realm config (clients, users, roles) once and commit the JSON file. Every CI run imports the same realm, so the IdP state is reproducible. The Playwright test navigates to your app's login page, follows the redirect to Keycloak, fills the IdP form, and returns with a signed assertion:

test("SSO login through Keycloak", async ({ page }) => {
  await page.goto("/login");
  await page.getByRole("button", { name: "Sign in with SSO" }).click();

  // Match on the realm path rather than the hostname: with the port mapping
  // above, Keycloak is reached at localhost:8080, not at a "keycloak" host
  await page.waitForURL(/\/realms\/[^/]+\//);
  await page.getByLabel("Username").fill("testuser");
  await page.getByLabel("Password").fill(process.env.KEYCLOAK_TEST_PASSWORD);
  await page.getByRole("button", { name: "Sign In" }).click();

  await page.waitForURL("/dashboard");
  await expect(page.getByText("Welcome")).toBeVisible();

  const cookies = await page.context().cookies();
  expect(cookies.some((c) => c.name === "session")).toBeTruthy();
});

The cross-origin navigation from your app to Keycloak and back is what makes this test valuable. It exercises the real redirect chain and SAML signature validation that mocks skip.

Multi-Tenant Isolation

If you're running multi-tenant auth, treat each tenant as a separate Playwright project with its own base URL, credentials, and storage state:

// playwright.config.ts
import { defineConfig } from "@playwright/test";

export default defineConfig({
  projects: [
    { name: "setup", testMatch: /.*\.setup\.ts/ },
    {
      name: "tenant-acme",
      use: {
        baseURL: "https://acme.your-app.com",
        storageState: "playwright/.auth/acme-user.json",
      },
      dependencies: ["setup"],
      testMatch: /.*tenant-a.*\.spec\.ts/,
    },
    {
      name: "tenant-globex",
      use: {
        baseURL: "https://globex.your-app.com",
        storageState: "playwright/.auth/globex-user.json",
      },
      dependencies: ["setup"],
      testMatch: /.*tenant-b.*\.spec\.ts/,
    },
  ],
});

For apps that resolve tenants dynamically (by header, path prefix, or login context rather than subdomain), use a fixture that injects the tenant identifier:

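import { test as baseTest } from "@playwright/test";

export const test = baseTest.extend<{ tenantId: string }>({
  // Declared as an option so projects or files can override it with test.use()
  tenantId: ["default-tenant", { option: true }],
  storageState: async ({ tenantId }, use) => {
    await use(`playwright/.auth/${tenantId}.json`);
  },
});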

Cross-tenant isolation tests are the most important part of multi-tenant auth coverage. Every test that confirms "Tenant A can access their data" needs a counterpart that confirms "Tenant A cannot access Tenant B's data":

test("tenant user cannot access another tenant", async ({ browser }) => {
  const acmeContext = await browser.newContext({
    storageState: "playwright/.auth/acme-user.json",
  });
  const page = await acmeContext.newPage();

  // page.goto() can resolve to null, so guard the status lookup
  const response = await page.goto("https://globex.your-app.com/dashboard");
  expect(response?.status()).toBe(403);

  await acmeContext.close();
});

This isn't just a feature requirement; it's a security boundary that deserves dedicated test coverage.

When SSO Breaks

SSO failures in CI usually come from certificate mismatches (your test IdP cert doesn't match what the app expects), clock skew (SAML assertions have a validity window, and CI servers with drifted clocks reject them), or redirect URI misconfigurations. Check the SAML assertion's NotBefore and NotOnOrAfter fields if your tests pass locally but fail in CI.
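To see how clock skew turns into a rejected assertion, it helps to write the validity check out. The following is a simplified sketch of the window test a service provider performs, not the code of any particular SAML library; the function name and the allowedSkewMs parameter are illustrative.

```typescript
// Checks whether `now` falls inside a SAML Conditions window, optionally
// tolerating some clock skew. NotBefore is inclusive, NotOnOrAfter is exclusive.
export function isWithinValidityWindow(
  notBefore: string,
  notOnOrAfter: string,
  now: Date = new Date(),
  allowedSkewMs = 0,
): boolean {
  const start = Date.parse(notBefore) - allowedSkewMs;
  const end = Date.parse(notOnOrAfter) + allowedSkewMs;
  if (Number.isNaN(start) || Number.isNaN(end)) {
    throw new Error("NotBefore/NotOnOrAfter must be parseable timestamps");
  }
  const t = now.getTime();
  return t >= start && t < end;
}
```

With a five-minute window starting at 12:00:00Z, a runner whose clock reads 11:59:30Z rejects an assertion the IdP has only just issued. Allowing 60 seconds of skew accepts it, which is the trade-off many SAML libraries expose as a clock tolerance setting.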

MFA and Session Management

Testing TOTP-Based MFA

Generate TOTP codes programmatically. The otpauth npm package produces valid six-digit codes from a shared secret stored as an environment variable:

import * as OTPAuth from "otpauth";

async function generateTOTP(): Promise<string> {
  const totp = new OTPAuth.TOTP({
    secret: OTPAuth.Secret.fromBase32(process.env.TOTP_SECRET),
    digits: 6,
    period: 30,
  });

  // Seconds left in the current 30-second window
  const now = Math.floor(Date.now() / 1000);
  const remaining = 30 - (now % 30);

  // Too close to the boundary: wait for the next window before generating
  if (remaining < 5) {
    await new Promise((r) => setTimeout(r, remaining * 1000));
  }

  return totp.generate();
}

The timing check matters. TOTP codes expire every 30 seconds. If you generate a code with 2 seconds left, network latency and DOM interaction time push the submission past the expiration boundary. The test fails with what looks like an auth error but is actually a timing problem. Generate the code right before submission, and wait for a fresh period if the window is too narrow. TOTP tests also become flaky if CI runner clocks drift more than one time window. Enforce NTP sync or freeze time in containerized test environments, since the same clock skew problem described in the SSO section applies here.

The shared secret is never hardcoded. It lives in CI secrets and is retrieved at runtime.

Session Behavior

Auth test suites often have the largest coverage gaps around session behavior. Many tests check that login works, but few test what happens afterward.

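Test expired session cookies. Use context.addCookies() to inject a session cookie with a past expiry, then verify your app prompts re-authentication instead of silently breaking. Browsers generally discard a cookie whose expiry is already in the past, so this mostly checks how the app behaves once the session cookie is gone. To exercise server-side expiry as well, inject a cookie the browser still sends but the server treats as expired: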

test("expired session redirects to login", async ({ browser }) => {
  const context = await browser.newContext({
    storageState: "playwright/.auth/user.json",
  });

  await context.addCookies([
    {
      name: "session",
      value: "expired-token-value",
      domain: "your-app.com",
      path: "/",
      expires: Math.floor(Date.now() / 1000) - 3600,
    },
  ]);

  const page = await context.newPage();
  await page.goto("/dashboard");
  await expect(page).toHaveURL(/\/login/);

  await context.close();
});

Test token refresh. Intercept the refresh endpoint with page.route() to control the response. This lets you verify the app handles both successful and failed refreshes:

test("silent token refresh keeps user logged in", async ({ page }) => {
  let refreshCount = 0;

  await page.route("**/api/auth/refresh", async (route) => {
    refreshCount++;
    await route.fulfill({
      status: 200,
      contentType: "application/json",
      body: JSON.stringify({ token: "refreshed-token", expiresIn: 3600 }),
    });
  });

  // Start waiting before navigating so a fast refresh call is not missed
  const refreshResponse = page.waitForResponse("**/api/auth/refresh");
  await page.goto("/dashboard");
  await refreshResponse;

  await expect(page.locator(".user-profile")).toBeVisible();
  expect(refreshCount).toBeGreaterThan(0);
});

test("failed token refresh redirects to login", async ({ page }) => {
  await page.route("**/api/auth/refresh", async (route) => {
    await route.fulfill({ status: 401 });
  });

  await page.goto("/dashboard");
  await expect(page).toHaveURL(/\/login/);
});

Test session invalidation. Call your API to invalidate a session server-side, then verify the next page load handles it gracefully instead of rendering a broken page:

test("invalidated session shows login prompt", async ({ page, request }) => {
  await page.goto("/dashboard");
  await expect(page.locator(".user-profile")).toBeVisible();

  await request.post("/api/test/invalidate-session");

  await page.goto("/settings");
  await expect(page).toHaveURL(/\/login/);
});

These behaviors don't always surface as visible errors. Sometimes they appear as subtle regressions: a settings page that loads without the user's preferences, an API call that returns a 200 with empty data instead of a 401. Test your session boundaries, not just your login form.

Debugging Auth Test Failures

Auth failures are the hardest test failures to diagnose. A 401 response, a redirect loop, or a blank page after login all point to auth issues, but the root cause can be a missing cookie, an unconfigured CI environment variable, or clock drift on the CI runner that invalidates a TOTP code.

Configure Traces for Auth Debugging

Set up your config to retain evidence when auth fails:

// playwright.config.ts
use: {
  // Records only when retries are enabled; 'retain-on-failure' also keeps first-attempt traces
  trace: 'on-first-retry',
  screenshot: 'only-on-failure',
  video: 'retain-on-failure',
}

Playwright's trace viewer records every network request and DOM snapshot. When an auth test fails, open the trace and check the Network tab for 401s and unexpected 302 redirects. To find missing or expired cookies, inspect the Cookie and Set-Cookie headers on those requests in the same tab. This tells you more than a stack trace ever will.

Isolate Stale State

If an auth test fails and you suspect stale state from a previous test, run it with a clean slate:

import { test } from "@playwright/test";

test.use({ storageState: { cookies: [], origins: [] } });

test("login from scratch", async ({ page }) => {
  await page.goto("/login");
  // If this passes but the normal test fails,
  // the problem is leftover state from your setup
});

This resets all cookies and storage, giving you a clean browser context. If the test passes from scratch but fails with the stored state, the problem is in your storageState file or the session it references.

Log Auth State at Each Step

For auth flows with multiple steps (login, redirect, token exchange, session creation), add logging so you can see where things diverge between local and CI:

setup("authenticate", async ({ page }) => {
  await page.goto("/login");
  await page.getByLabel("Email").fill(process.env.TEST_USER_EMAIL);
  await page.getByLabel("Password").fill(process.env.TEST_USER_PASSWORD);
  await page.getByRole("button", { name: "Sign in" }).click();
  await page.waitForURL("/dashboard");

  const cookies = await page.context().cookies();
  console.log(`Auth cookies: ${cookies.map((c) => c.name).join(", ")}`);
  console.log(`Current URL: ${page.url()}`);

  await page.context().storageState({ path: authFile });
});

Failures that appear only in CI almost always stem from environment differences: misconfigured API endpoints, missing OAuth credentials, timezone offsets that change token expiry, or cookie domain mismatches. These logs are where those differences become visible.

Currents tracks auth failures across runs and distinguishes real flakiness from failures caused by state leakage, so you can spot recurring patterns without digging through CI logs manually.

Integration with CI/CD and Secrets Management

Auth tests that pass locally and fail in CI usually trace to credentials that exist on your machine but were never configured in the pipeline.

Secrets Injection

Sensitive values should never live in artifacts or source control. Use the built-in secret injection mechanisms: GitHub Actions encrypted secrets, GitLab CI masked variables, or the Jenkins Credentials Plugin. HashiCorp Vault strengthens this by issuing credentials only when a specific pipeline stage requests them and revoking them when that stage ends.
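Whatever mechanism injects the secrets, it helps to fail fast when one is missing instead of letting a login form receive an empty value. The helper below is a minimal sketch; the function name and error message are illustrative, and it takes the environment as a parameter so it can be exercised without a real pipeline:

```typescript
// Hypothetical helper: verifies that every required secret is present
// and returns the resolved values with a non-optional type.
type Env = Record<string, string | undefined>;

function requireEnv(env: Env, names: string[]): Record<string, string> {
  const missing = names.filter((name) => !env[name]);
  if (missing.length > 0) {
    // Report variable names only; never echo secret values into CI logs.
    throw new Error(`Missing required secrets: ${missing.join(", ")}`);
  }
  const resolved: Record<string, string> = {};
  for (const name of names) {
    resolved[name] = env[name] as string;
  }
  return resolved;
}
```

Called once at the top of an auth setup file, for example as requireEnv(process.env, ["TEST_USER_EMAIL", "TEST_USER_PASSWORD"]), it turns a confusing login timeout into an error that names the variable the pipeline forgot to configure.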

Dynamic Credential Creation

Create short-lived credentials at the start of a CI run and remove them at the end. Vault can issue a database password valid for one hour instead of relying on long-lived credentials stored in your CI platform. Credentials that exist only for the duration of a pipeline run have no exposure window between runs.
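The issue-then-revoke lifecycle can be sketched independently of any particular secrets backend. In the example below, CredentialProvider is a hypothetical interface standing in for Vault or an internal admin API, and withEphemeralCredential is an illustrative name, not an existing library function:

```typescript
interface EphemeralCredential {
  username: string;
  password: string;
}

// Hypothetical abstraction over whatever system issues and revokes credentials.
interface CredentialProvider {
  issue(ttlSeconds: number): Promise<EphemeralCredential>;
  revoke(credential: EphemeralCredential): Promise<void>;
}

async function withEphemeralCredential<T>(
  provider: CredentialProvider,
  ttlSeconds: number,
  run: (credential: EphemeralCredential) => Promise<T>,
): Promise<T> {
  const credential = await provider.issue(ttlSeconds);
  try {
    return await run(credential);
  } finally {
    // Revoke even when the run throws, so nothing outlives the pipeline.
    await provider.revoke(credential);
  }
}
```

The TTL acts as a backstop: if the runner is killed before the finally block executes, the credential still expires on its own.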

Handling Auth Expiry During Long CI Runs

Long CI runs (30+ minutes for large suites) can outlive your token TTLs. The auth setup succeeds, but tests later in the run fail with 401s because the session expired. Two approaches:

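Use testProject.outputDir for auto-cleanup. Write auth files under testProject.outputDir instead of a fixed path. Playwright cleans this directory at the start of each run, so every run begins with a fresh login rather than a session left over from a previous run. This is the simplest fix when stale files are the cause, but it does not help when a token's TTL is shorter than the run itself.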

Check token validity before use. Build a worker-scoped fixture that validates the stored auth state at the start of each worker and re-authenticates if expired:

// playwright/fixtures.ts
import { test as base } from "@playwright/test";
import fs from "fs";
import path from "path";

export const test = base.extend<{}, { validatedAuth: string }>({
  // Every test in this worker starts from the validated auth state
  storageState: ({ validatedAuth }, use) => use(validatedAuth),

  validatedAuth: [
    async ({ browser, playwright }, use) => {
      const { project, parallelIndex } = test.info();
      // Manually created contexts do not inherit baseURL from the config
      const baseURL = project.use.baseURL;
      // One file per worker slot, so parallel workers never write the same file
      const authFile = path.resolve(
        project.outputDir,
        `.auth/user-${parallelIndex}.json`,
      );

      if (fs.existsSync(authFile)) {
        // Reuse the stored session only if the server still accepts it
        const context = await playwright.request.newContext({
          baseURL,
          storageState: authFile,
        });
        const check = await context.get("/api/me");
        await context.dispose();
        if (check.ok()) {
          await use(authFile);
          return;
        }
      }

      // Stored state is missing or expired: log in again from a clean context
      const page = await browser.newPage({ baseURL, storageState: undefined });
      await page.goto("/login");
      await page.getByLabel("Email").fill(process.env.TEST_USER_EMAIL!);
      await page.getByLabel("Password").fill(process.env.TEST_USER_PASSWORD!);
      await page.getByRole("button", { name: "Sign in" }).click();
      await page.waitForURL("/dashboard");

      fs.mkdirSync(path.dirname(authFile), { recursive: true });
      await page.context().storageState({ path: authFile });
      await page.close();
      await use(authFile);
    },
    { scope: "worker" },
  ],
});
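The fixture above validates the session with a network call. A cheaper first pass is to read the cookie expiry already recorded in the storageState file, where expires is Unix time in seconds and -1 marks a session cookie. The sketch below assumes your app's session lives in a single named cookie; the function name and the safety margin are illustrative:

```typescript
// Subset of the storageState file format that this check needs.
interface StoredCookie {
  name: string;
  expires: number; // Unix time in seconds, or -1 for session cookies
}

interface StoredState {
  cookies: StoredCookie[];
}

// Returns true when the named cookie is missing or will expire within the margin.
function needsReauth(
  state: StoredState,
  cookieName: string,
  nowMs: number,
  marginSeconds: number,
): boolean {
  const matches = state.cookies.filter((c) => c.name === cookieName);
  if (matches.length === 0) return true;
  const cookie = matches[0];
  // Session cookies record no expiry, so only the server can say if they are valid.
  if (cookie.expires <= 0) return false;
  return cookie.expires - nowMs / 1000 < marginSeconds;
}
```

A cookie that has not expired can still be rejected server-side, so this complements the /api/me check rather than replacing it. Setting the margin to the expected remaining run time catches sessions that would expire mid-run.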

Credential rotation itself should be handled outside the test suite. Your tests consume credentials when provided. The rotation policies and expiration rules belong to the secrets management system.

Compliance and Security

Auth test infrastructure falls within the compliance boundary. Auditors generally do not exempt test systems just because they are test systems. A storageState file with a valid session token, committed to a repo and forgotten, is an exposed credential and can become a reportable incident under SOC 2 or GDPR, depending on what that session can reach. ISO 27002 Control 8.31 covers the separation of development, test, and production environments.

The numbers make this concrete. The 2025 Verizon Data Breach Investigations Report found that GitLab tokens made up 50% of leaked CI/CD secrets across public repositories. The median time to remediate secrets leaked on GitHub was 94 days. If you handle sensitive data, your auth flows should align with SOC 2, ISO 27001, and GDPR.

Auditing Your Test Suite for Leaked Auth State

Knowing the rules (don't commit credentials, don't use production accounts in tests) isn't the hard part. The hard part is finding the violations that already exist. Run these checks on any suite that's been around for more than a few months.

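Scan git history for committed auth files. Files deleted from HEAD still exist in the repo history. Check whether auth directories were ever committed, then search JSON files for the "origins" key that every storageState file contains. The --name-only flag lists matching files without printing their contents to your terminal:

git log --all --diff-filter=A --name-only -- 'playwright/.auth/'

git log --all --name-only -S '"origins"' -- '*.json'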

If you find matches, the credentials may still be valid. Rotate them, then clean the history with git filter-repo or BFG Repo-Cleaner.
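File names are not a reliable signal, because an auth file can be saved under any name. A storageState file is a JSON object with top-level cookies and origins arrays, so a content check catches renamed files too. The function below is a minimal sketch with an illustrative name; it only inspects the shape and does not decide whether the session inside is still valid:

```typescript
// Returns true when the text parses as JSON with the top-level shape
// of a Playwright storageState file: { cookies: [...], origins: [...] }.
function looksLikeStorageState(jsonText: string): boolean {
  let parsed: unknown;
  try {
    parsed = JSON.parse(jsonText);
  } catch (e) {
    return false; // not JSON at all
  }
  if (typeof parsed !== "object" || parsed === null) return false;
  const candidate = parsed as { cookies?: unknown; origins?: unknown };
  return Array.isArray(candidate.cookies) && Array.isArray(candidate.origins);
}
```

Run over the contents of every tracked .json file, this gives a quick list of candidates to review and rotate.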

Verify .gitignore coverage. Confirm that your auth directory is actually ignored, not just mentioned in documentation:

git check-ignore -v playwright/.auth/user.json

If there's no output, the path isn't ignored, and the next git add . will stage it for commit.

Audit CI artifact retention. Playwright traces contain full network request and response data, including auth cookies and tokens. If your CI pipeline uploads traces, screenshots, or HTML reports as artifacts with 30- or 90-day retention, you're storing credentials in your CI platform. Shorten retention to the minimum you need, or strip Set-Cookie and Authorization headers before upload.
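For logs or reports that your own tooling writes, header redaction is straightforward. The sketch below is a hypothetical helper, not a Playwright option: it masks Set-Cookie and Authorization, plus Cookie as an assumption that request cookies are just as sensitive. Applying the same idea to trace archives would mean post-processing them, which is not shown here:

```typescript
// Header names are compared case-insensitively.
const SENSITIVE_HEADERS = ["authorization", "cookie", "set-cookie"];

// Returns a copy of the headers with sensitive values masked.
function redactHeaders(headers: Record<string, string>): Record<string, string> {
  const redacted: Record<string, string> = {};
  for (const name of Object.keys(headers)) {
    const sensitive = SENSITIVE_HEADERS.indexOf(name.toLowerCase()) !== -1;
    redacted[name] = sensitive ? "[REDACTED]" : headers[name];
  }
  return redacted;
}
```

The original object is left untouched, so the test can keep using real headers while only the logged copy is masked.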

Check for production credentials in test environments. List the environment variables your test suite consumes and confirm none of them work against production. A staging API key that also has production access is a common blind spot, especially in early-stage apps where environments share the same auth provider.

Confirm auth files use testProject.outputDir. Auth files written to a fixed path like playwright/.auth/user.json persist between runs unless you explicitly delete them. Files written under testProject.outputDir are cleaned automatically before each run. Check your setup files and fixtures for hardcoded paths.

Observability

A single failed login in a 200-test suite could signal a broken feature, a leftover session from an earlier test, an expired token, or a misconfigured CI environment. You need to distinguish real regressions from environmental noise, and that requires looking at patterns across runs.

The questions that matter: Are login errors appearing on the same tests each run, or shifting unpredictably? Do groups of tests fail together in ways that suggest shared auth state? Is login time gradually increasing across runs, indicating infrastructure degradation before it becomes a hard failure?
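The second question, whether tests fail together, can be answered from nothing more than a list of failed test names per run. The sketch below counts how often each pair of tests failed in the same run; the RunResult shape and function name are assumptions about how you export results, not a Playwright or Currents API:

```typescript
interface RunResult {
  runId: string;
  failedTests: string[];
}

interface CoFailure {
  tests: [string, string];
  runs: number; // number of runs in which both tests failed
}

// Returns pairs of tests that failed together in at least minRuns runs,
// most frequent first.
function coFailingPairs(runs: RunResult[], minRuns: number): CoFailure[] {
  const counts: Record<string, number> = {};
  for (const run of runs) {
    // De-duplicate and sort so each pair has one canonical order.
    const failed = run.failedTests
      .filter((t, i, all) => all.indexOf(t) === i)
      .sort();
    for (let i = 0; i < failed.length; i++) {
      for (let j = i + 1; j < failed.length; j++) {
        const key = JSON.stringify([failed[i], failed[j]]);
        counts[key] = (counts[key] || 0) + 1;
      }
    }
  }
  return Object.keys(counts)
    .filter((key) => counts[key] >= minRuns)
    .map((key) => ({
      tests: JSON.parse(key) as [string, string],
      runs: counts[key],
    }))
    .sort((a, b) => b.runs - a.runs);
}
```

Pairs that keep failing together across runs are good candidates for a shared-account or shared-storageState investigation before you debug either test on its own.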

Structured Auth Logging

Add structured output to your auth setup so you can diff local runs against CI runs. Log the fields that actually matter for diagnosing auth failures:

import { test as setup } from "@playwright/test";

// Assumed location; use the same path your existing auth setup writes to
const authFile = "playwright/.auth/user.json";

setup("authenticate", async ({ page }, testInfo) => {
  await page.goto("/login");
  await page.getByLabel("Email").fill(process.env.TEST_USER_EMAIL!);
  await page.getByLabel("Password").fill(process.env.TEST_USER_PASSWORD!);
  await page.getByRole("button", { name: "Sign in" }).click();
  await page.waitForURL("/dashboard");

  // Keep only cookies whose names suggest they carry the session
  const cookies = await page.context().cookies();
  const authCookies = cookies.filter((c) =>
    ["session", "token", "sid"].some((name) =>
      c.name.toLowerCase().includes(name),
    ),
  );

  // Log names, domains, and expiry only; never log cookie values
  console.log(
    JSON.stringify({
      event: "auth_setup_complete",
      url: page.url(),
      workerIndex: testInfo.workerIndex,
      parallelIndex: testInfo.parallelIndex,
      cookies: authCookies.map((c) => ({
        name: c.name,
        domain: c.domain,
        expires:
          c.expires > 0 ? new Date(c.expires * 1000).toISOString() : "session",
      })),
      timestamp: new Date().toISOString(),
    }),
  );

  await page.context().storageState({ path: authFile });
});

When a CI auth test fails, pull the logs for that run and compare the cookies and url fields against a passing local run. The differences are usually obvious: a missing cookie, a different domain, an expiry timestamp that's already in the past. Without these fields, you're guessing.
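That comparison can be scripted once both log lines are parsed. The sketch below assumes the event shape logged above, with expires as either an ISO timestamp or the string "session". The function name and message wording are illustrative:

```typescript
interface AuthLogCookie {
  name: string;
  domain: string;
  expires: string; // ISO timestamp, or "session"
}

interface AuthLogEvent {
  url: string;
  cookies: AuthLogCookie[];
}

// Lists the cookie differences between a passing local run and a failing CI run.
function diffAuthEvents(
  local: AuthLogEvent,
  ci: AuthLogEvent,
  now: Date,
): string[] {
  const findings: string[] = [];
  for (const expected of local.cookies) {
    const actual = ci.cookies.filter((c) => c.name === expected.name)[0];
    if (actual === undefined) {
      findings.push(`${expected.name}: missing in CI`);
    } else if (actual.domain !== expected.domain) {
      findings.push(
        `${expected.name}: domain ${expected.domain} locally, ${actual.domain} in CI`,
      );
    }
  }
  for (const cookie of ci.cookies) {
    const expired =
      cookie.expires !== "session" &&
      Date.parse(cookie.expires) <= now.getTime();
    if (expired) {
      findings.push(`${cookie.name}: already expired at ${cookie.expires}`);
    }
  }
  return findings;
}
```

A domain difference is expected when local and CI target different hosts, so treat that finding as a prompt to check the cookie's scope rather than as proof of a bug.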

Playwright's debug tools help with individual failures, but for patterns across runs, you need something that aggregates results over time. Currents tracks auth test behavior across executions and surfaces correlated failures without changing your existing test code. If three auth tests started failing together last Thursday, that's a state leakage problem, not three independent bugs.
